About microbatch incremental models

The microbatch strategy is available in dbt Cloud Versionless and dbt Core v1.9.

Read and participate in the discussion: dbt-core#10672

What is microbatch?

Incremental models in dbt are a materialization designed to efficiently update your data warehouse tables by only transforming and loading new or changed data since the last run. Instead of reprocessing an entire dataset every time, incremental models append, update, or replace rows in the existing table with the new data just processed. This can significantly reduce the time and resources required for your data transformations.

Microbatch incremental models make it possible to process transformations on large time-series datasets with efficiency and resiliency. When dbt runs a microbatch model — whether for the first time, during incremental runs, or during manual backfills — it will split the processing into multiple queries (or "batches"), based on the event_time column you configure.

Where other incremental strategies operate only on "old" and "new" data, microbatch models treat each "batch" of data as a unit that can be built or replaced on its own. Each batch is independent and idempotent. This is a powerful abstraction that makes it possible for dbt to run batches separately — in the future, concurrently — and to retry them independently.

Available configs

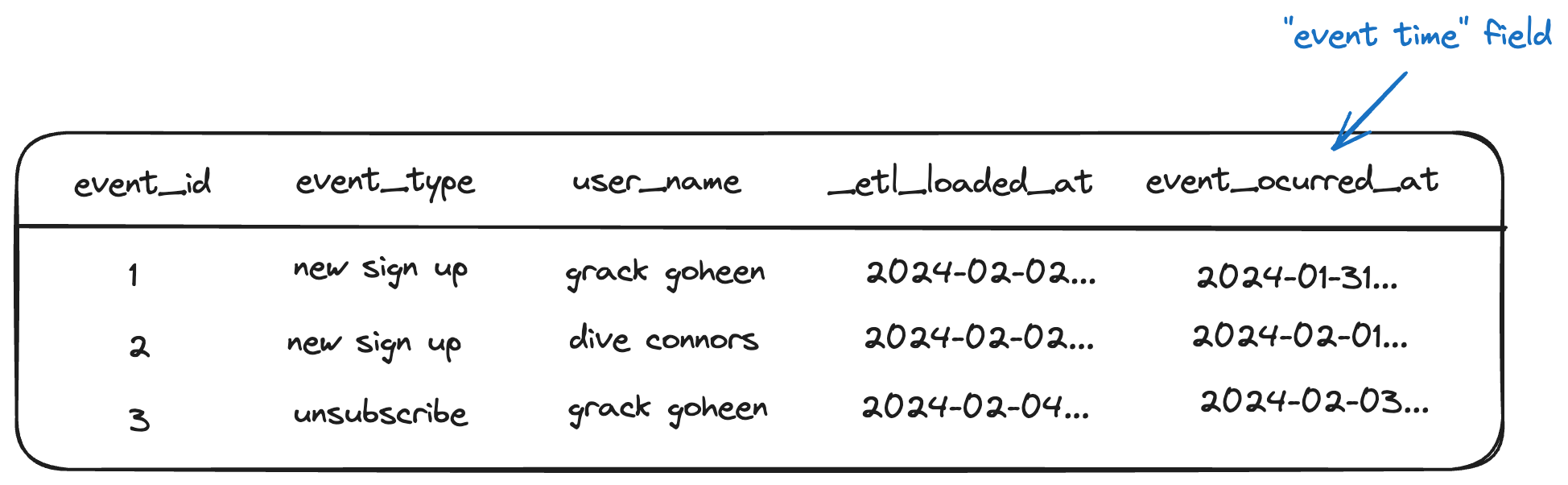

event_time- The column indicating "at what time did the row occur" (for both your microbatch model and its direct parents)

batch_size(string, optional) - The granularity of your batches. The default isday, and currently that is the only granularity supported.lookback(integer, optional) - Process X batches prior to the latest bookmark, in order to capture late-arriving records. The default value is0.begin(date, optional) - The "beginning of time" for your data. This is the starting point for any initial or full-refresh builds. For example, a daily-grain microbatch model run on2024-10-01withbegin = '2023-10-01will process 366 batches. (It's a leap year!)

As a best practice, we recommend configuring full_refresh: False on microbatch models so that they ignore invocations with the --full-refresh flag. If you need to reprocess historical data, do so with a targeted backfill.

Usage

You write your model query to process (read and return) one day of data. You don’t need to think about is_incremental filtering or DML (upserting/merging/replacing) - we take care of that for you.

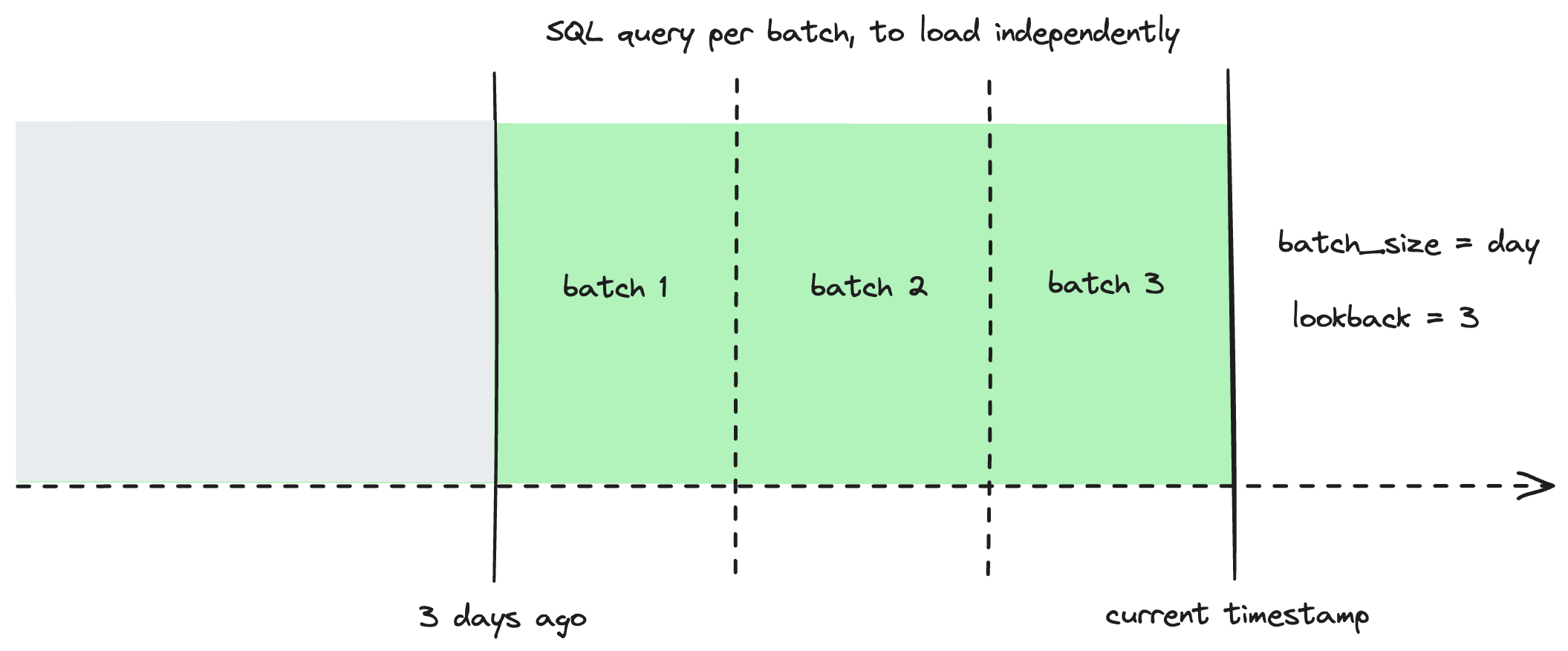

dbt will then evaluate which batches need to be loaded, break them up into a SQL query per batch, and load each one independently.

dbt will automatically filter upstream inputs (source or ref) that define event_time, based on the lookback and batch_size configs for this model.

During standard incremental runs, dbt will process new batches and any according to the configured lookback (with one query per batch)

If there’s an upstream model that configures event_time, but you don’t want the reference to it to be filtered, you can specify ref('upstream_model').render() to opt-out of auto-filtering.

dbt will evaluate which batches need to be loaded by processing the current batch (current_timestamp) + any batches in your configured lookback, break them up into a SQL query per batch, and load them all independently.

Backfills

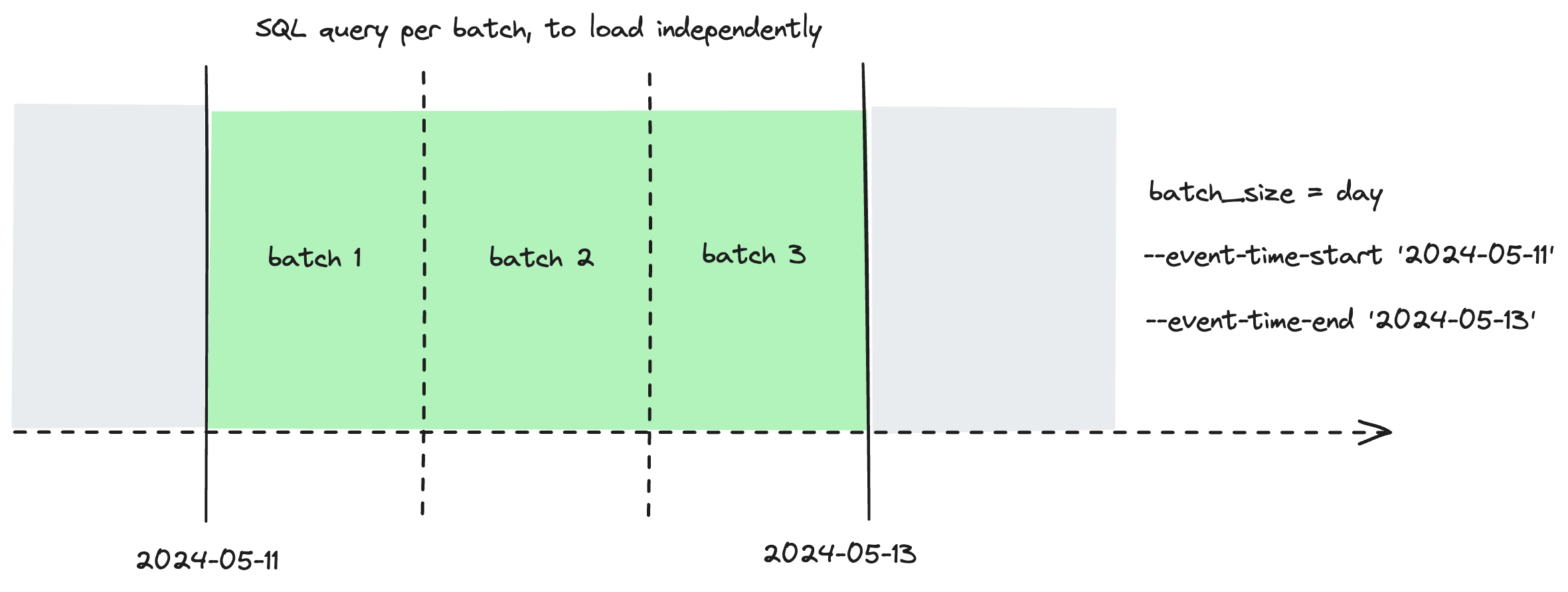

Whether to fix erroneous source data, or retroactively apply a change in business logic, you may need to reprocess a large amount of historical data.

Backfilling a microbatch model is as simple as selecting it to run or build, and specifying a "start" and "end" for event_time. As always, dbt will process the batches between the start and end as independent queries.

dbt run --event-time-start "2024-09-01" --event-time-end "2024-09-04"

Retry

If one or more of your batches fail, you can use dbt retry to reprocess only the failed batches.

Timezones

For now, dbt assumes that all values supplied are in UTC:

event_timebegin--event-time-startand--event-time-end

While we may consider adding support for custom timezones in the future, we also believe that defining these values in UTC makes everyone's lives easier.

How does microbatch compare to other incremental strategies?

Most incremental models rely on the end user (you) to explicitly tell dbt what "new" means, in the context of each model, by writing a filter in an is_incremental() block. You are responsibly for crafting this SQL in a way that queries this to check when the most recent record was last loaded, with an optional look-back window for late-arriving records. Other incremental strategies will control how the data is being added into the table — whether append-only insert, delete + insert, merge, insert overwrite, etc — but they all have this in common.

As an example:

{{

config(

materialized='incremental',

incremental_strategy='delete+insert',

unique_key='date_day'

)

}}

select * from {{ ref('stg_events') }}

{% if is_incremental() %}

-- this filter will only be applied on an incremental run

-- add a lookback window of 3 days to account for late-arriving records

where date_day >= (select {{ dbt.dateadd("day", -3, "max(date_day)") }} from {{ this }})

{% endif %}

For this incremental model:

- "New" records are those with a

date_daygreater than the maximumdate_daythat has previously been loaded - The lookback window is 3 days

- When there are new records for a given

date_day, the existing data fordate_dayis deleted and the new data is inserted

Let’s take our same example from before, and instead use the new microbatch incremental strategy:

{{

config(

materialized='incremental',

incremental_strategy='microbatch',

event_time='event_occured_at',

batch_size='day',

lookback=3,

begin='2020-01-01',

full_refresh=false

)

}}

select * from {{ ref('stg_events') }} # this ref will be auto-filtered

Where you’ve also set an event_time for the model’s direct parents - in this case stg_events:

models:

- name: stg_events

config:

event_time: my_time_field

And that’s it! When you run the model, each batch templates a separate query. The batch for 2024-10-01 would template:

select * from (

select * from {{ ref('stg_events') }}

where my_time_field >= '2024-10-01 00:00:00'

and my_time_field < '2024-10-02 00:00:00'

) # this ref will be auto-filtered